Artificial Intelligence & Machine Learning

β (regressionCoefficient)

η (learningRate)

γ (regularizationParameter)

μ (populationMean)

σ (standardDeviation)

θ (modelParameters)

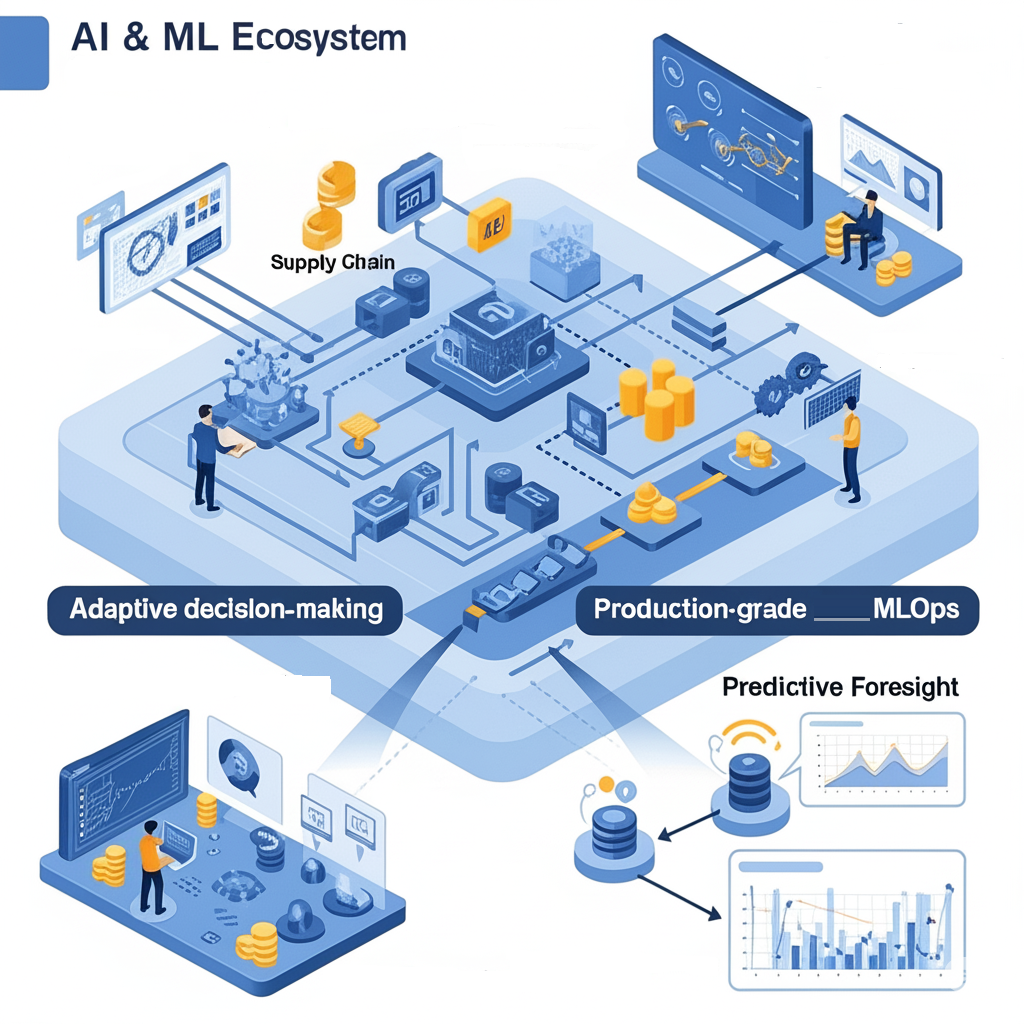

At Ternion Vergence, Artificial Intelligence and Machine Learning form the cognitive backbone of our digital engineering philosophy. We specialize in crafting bespoke AI/ML ecosystems that transcend traditional boundaries—integrating statistical rigor, domain-aware feature representations, and production-grade MLOps into cohesive, scalable, and interpretable frameworks.

Our capability extends beyond pre-packaged models or superficial automation. We embed intelligence at the core of systems—infusing adaptive decision-making into supply chains, embedding language understanding in enterprise communication pipelines, and orchestrating predictive foresight within dynamic data environments.

“I propose to consider the question, ‘Can machines think?’” (Alan Turing, 1950)

-

We harness state-of-the-art Transformer-based architectures and integrate Retrieval-Augmented Generation (RAG) paradigms to build models that not only understand language but also interact meaningfully with unstructured data in real time.

Applications:

Domain-specific LLM fine-tuning (e.g., legal, healthcare, retail)

Intelligent document parsing and semantic tagging

Cognitive search and enterprise Q&A bots
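To illustrate the retrieval step behind RAG, here is a minimal sketch in Python. It is not a production pipeline: it swaps the dense embedding model and vector database a real RAG system would typically use for a plain TF-IDF bag-of-words scorer built from the standard library, and the function names (`retrieve`, `build_prompt`), sample documents, and prompt wording are all hypothetical. The top-ranked passages are placed into a prompt that would then be sent to a language model.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs: list[str]) -> tuple[list[Counter], dict[str, float]]:
    """Count terms per document and compute smoothed inverse document frequencies."""
    counts = [Counter(tokenize(d)) for d in docs]
    n = len(docs)
    df = Counter(term for c in counts for term in c)
    idf = {t: math.log((1 + n) / (1 + f)) + 1.0 for t, f in df.items()}
    return counts, idf


def tfidf(counts: Counter, idf: dict[str, float]) -> dict[str, float]:
    # Terms that never appear in the corpus are dropped (they cannot match anything)
    return {t: c * idf[t] for t, c in counts.items() if t in idf}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    na = math.sqrt(sum(w * w for w in a.values()))
    nb = math.sqrt(sum(w * w for w in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (hypothetical helper)."""
    counts, idf = build_index(docs)
    doc_vecs = [tfidf(c, idf) for c in counts]
    q_vec = tfidf(Counter(tokenize(query)), idf)
    ranked = sorted(range(len(docs)), key=lambda i: cosine(q_vec, doc_vecs[i]), reverse=True)
    return [docs[i] for i in ranked[:k]]


def build_prompt(query: str, passages: list[str]) -> str:
    """Ground the question in retrieved context (prompt wording is illustrative)."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


# Usage with a tiny hypothetical knowledge base
docs = [
    "Refunds are processed within 14 days of the return request.",
    "Our headquarters relocated in 2021.",
    "Warranty claims require the original receipt.",
]
query = "How long do refunds take?"
print(build_prompt(query, retrieve(query, docs, k=1)))
```

Swapping `tfidf` and `cosine` for an embedding model and an approximate nearest-neighbor index changes the retrieval quality, but the shape of the pipeline (score, rank, insert into prompt) stays the same.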

-

We specialize in probabilistic and hybrid deep-learning models for short-, medium-, and long-term forecasting under conditions of volatility and high dimensionality.

Solutions Include:

Hybrid SARIMA + LSTM architectures

Prophet and NeuralProphet ensembles

Vector Autoregression (VAR) and Bayesian Structural Time Series (BSTS)

MLForecast + Featuretools on hierarchical time series
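VAR, listed above, models several series jointly: each series at time t is regressed on the previous values of all series, y_t = c + A y_{t-1} + e_t for a first-order model. As a minimal sketch, assuming one lag, simulated data with made-up coefficients, and hypothetical helper names (`fit_var1`, `forecast_var1`), the intercept c and coefficient matrix A can be estimated by ordinary least squares in NumPy. Libraries such as statsmodels provide a full VAR implementation with lag selection and diagnostics; this only shows the mechanics.

```python
import numpy as np


def fit_var1(y: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """OLS estimate of c and A in y_t = c + A @ y_{t-1} + e_t.

    y has shape (T, k): T time steps of k jointly modeled series.
    """
    X = np.hstack([np.ones((len(y) - 1, 1)), y[:-1]])  # intercept column + lag-1 values
    Y = y[1:]                                           # values to predict
    B, *_ = np.linalg.lstsq(X, Y, rcond=None)           # B has shape (1 + k, k)
    c = B[0]                                            # intercept for each series
    A = B[1:].T                                         # transpose so y_t ≈ c + A @ y_{t-1}
    return c, A


def forecast_var1(c: np.ndarray, A: np.ndarray, last: np.ndarray, steps: int) -> np.ndarray:
    """Iterate the fitted recursion forward from the last observation."""
    preds = []
    current = last
    for _ in range(steps):
        current = c + A @ current
        preds.append(current)
    return np.array(preds)


# Usage on simulated data with known (assumed) coefficients
rng = np.random.default_rng(0)
A_true = np.array([[0.5, 0.2], [0.0, 0.3]])
c_true = np.array([1.0, -0.5])
y = np.zeros((20_000, 2))
for t in range(1, len(y)):
    y[t] = c_true + A_true @ y[t - 1] + 0.1 * rng.standard_normal(2)

c_hat, A_hat = fit_var1(y)
print(np.round(A_hat, 2))
print(forecast_var1(c_hat, A_hat, y[-1], steps=3))
```

Because both eigenvalues of the assumed A are below 1 in magnitude, the simulated process is stationary and the multi-step forecast converges toward the series means.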

-

From computer vision to sequential decision processes, we engineer intelligent systems using advanced neural architectures. These include:

CNNs for multimodal image processing

Transformers for multimodal (text+image) inputs

GANs for synthetic data generation and anonymization

RNNs and LSTMs for pattern recognition in temporal data

Reinforcement Learning for autonomous behavior modeling

Tech Stack: PyTorch, TensorFlow, NVIDIA Triton Inference Server, Hugging Face, TorchServe, Weights & Biases.

-

We remain fluent in the foundational machine learning paradigms that solve structured (tabular) data problems efficiently.

Key Services:

XGBoost, LightGBM, CatBoost pipelines with hyperparameter tuning via Optuna

Feature selection with mutual information and permutation importance

Explainability using SHAP, LIME, and counterfactual diagnostics

Cross-validation strategies: time-series, group-aware, stratified K-fold
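The three cross-validation strategies named above differ in what they keep out of each training fold. The sketch below uses scikit-learn's `TimeSeriesSplit`, `GroupKFold`, and `StratifiedKFold` splitters on a small toy dataset; the data, the four-customer grouping, and the helper `folds` are hypothetical and exist only to make each guarantee visible.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, StratifiedKFold, TimeSeriesSplit

# Toy data (hypothetical): 12 rows, 3 features, balanced binary labels,
# and a grouping of 4 customers with 3 rows each.
rng = np.random.default_rng(0)
X = rng.normal(size=(12, 3))
y = np.array([0, 1] * 6)
groups = np.repeat(np.arange(4), 3)


def folds(splitter, X, y=None, groups=None):
    """Materialize the (train_idx, test_idx) pairs a scikit-learn splitter yields."""
    return list(splitter.split(X, y, groups))


# Time-series CV: each training window ends before its test window starts (no look-ahead).
ts_folds = folds(TimeSeriesSplit(n_splits=3), X)

# Group-aware CV: all rows of a customer land on the same side of the split (no leakage).
group_folds = folds(GroupKFold(n_splits=4), X, y, groups)

# Stratified K-fold: each test fold preserves the overall class ratio.
strat_folds = folds(StratifiedKFold(n_splits=3, shuffle=True, random_state=0), X, y)

for name, fs in [("time-series", ts_folds), ("group", group_folds), ("stratified", strat_folds)]:
    for train, test in fs:
        print(name, "train:", train.tolist(), "test:", test.tolist())
```

Choosing the splitter to match how data arrives in production (chronologically, per customer, or with rare classes) keeps validation scores honest before any hyperparameter search is layered on top.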

-

We engineer ML pipelines for reliability, reproducibility, and continuous improvement—ensuring your models do not just perform in the lab, but thrive in real-world environments.

Capabilities:

CI/CD pipelines with GitHub Actions, MLflow, and Dockerized training environments

Distributed training using Amazon SageMaker, Vertex AI Pipelines, or the Azure ML SDK

Monitoring via Prometheus + Grafana or integrated model watchdogs (e.g., Fiddler, Arize)

Canary deployment and model A/B testing in production
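Canary releases and A/B tests both depend on assigning each request to a model variant consistently. As a minimal sketch, assuming requests carry a stable identifier and using a hypothetical salt string and function names, hashing the identifier maps it to a uniform bucket so the same user always reaches the same variant. For comparing a binary success metric between variants, a pooled two-proportion z-test is shown as one common option, not the only one.

```python
import hashlib
import math


def assign_variant(request_id: str, canary_fraction: float, salt: str = "model-v2-canary") -> str:
    """Deterministically route a request to 'canary' or 'stable'.

    The salt (hypothetical) lets each experiment reshuffle users independently.
    """
    digest = hashlib.sha256(f"{salt}:{request_id}".encode("utf-8")).digest()
    # Keep 53 bits so the division yields an exact float in [0, 1)
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return "canary" if bucket < canary_fraction else "stable"


def two_proportion_z(successes_a: int, n_a: int, successes_b: int, n_b: int) -> float:
    """Pooled two-proportion z statistic for B versus A (positive means B did better)."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / se


# Usage: send roughly 10% of traffic to the canary model
ids = [f"user-{i}" for i in range(10_000)]
share = sum(assign_variant(i, 0.10) == "canary" for i in ids) / len(ids)
print(f"canary share: {share:.3f}")
print(f"z = {two_proportion_z(100, 1000, 130, 1000):.2f}")
```

Raising `canary_fraction` step by step only adds users to the canary group; anyone already routed there stays there, which keeps per-user metrics comparable across the rollout.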

Many consulting firms stop at model development. At Ternion Vergence, we take a more comprehensive approach, embedding advanced intelligence throughout the entire lifecycle of our solutions.

Domain-Infused Models: Our context-aware models are developed jointly by subject matter experts (SMEs) and data scientists, so they capture the nuances of each domain.

Architecture-Agnostic Deployments: Whether you run a cloud-native framework or hybrid infrastructure, we adapt our solutions to your environment and requirements rather than expecting you to conform to a rigid model.

Holistic MLOps Governance: Model fairness, comprehensive versioning, detailed lineage tracking, and compliance controls are built into our frameworks from the outset. They are core components of our design philosophy, not afterthoughts.

Research-to-Production Velocity: Our teams rapidly prototype innovative solutions with the latest open-source tools and foundation models, then harden those prototypes to meet the rigorous standards of enterprise-grade scalability.